People & Privacy

Throughout his research, including the study regarding those who pledged to give up Facebook for 99 days, Baumer found that many people had different privacy concerns. Some didn’t feel they had control over what their friends saw about them and that made them uncomfortable. Others didn’t like that Facebook was monetizing data about their social interactions, which Baumer says is fundamentally a different kind of privacy concern.

“There were several studies where people voiced different types of privacy concerns and there was this concern about ‘What’s being done with my data?’” Baumer says. “That was what got me into this strand of thinking about algorithmic aspects of data privacy.”

Those thoughts, and an NSF-funded collaborative grant, helped lead him to collaborate with Andrea Forte, associate professor of information science at Drexel University, on data privacy. Specifically, they are researching how people navigate a world in which data is collected at every turn and how systems can be better designed to support those behaviors.

One thing that interests Baumer as far as data privacy is concerned is the notion of obfuscation. He says his interest was fueled in part by Obfuscation: A User’s Guide for Privacy and Protest, in which authors Finn Brunton and Helen Nissenbaum argue that in modern society, opting out is not an option.

“Non-use isn’t viable,” Baumer says, summarizing the authors’ thoughts. “You have to have a driver’s license and you probably have to have a credit card. You can’t do everything with cash. You need whatever your country’s equivalent of a social security number is. So [Brunton and Nissenbaum] argue, for people who are opposed to these schemes of data collection and analysis, the only means of recourse is obfuscation—doing things that conceal your data or conceal details within your data.”

Baumer says Brunton and Nissenbaum compare this to chaff, the radar countermeasure used in World War II, when planes released strips of aluminum. One blip on radar (the plane) becomes dozens of blips, making it impossible to tell which one is the plane. The same strategy, creating noise so the actual data is hard to find, is used today in a tool called TrackMeNot. That tool periodically pings Google with random search queries so someone looking at a user’s search history won’t be able to tell which queries the user actually made and which came from the tool.
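The noise strategy described above can be illustrated with a small simulation. This is only a sketch of the general idea, not how TrackMeNot itself is implemented: it sends no requests to any search engine, and the decoy list, the function names `obfuscate` and `observer_view`, and the `decoys_per_real` parameter are all hypothetical choices made for illustration.

```python
import random

# Hypothetical decoy vocabulary, assumed for illustration only.
DECOY_TERMS = [
    "weather radar",
    "banana bread recipe",
    "used bicycles",
    "train schedule",
    "movie times",
    "garden soil ph",
]


def obfuscate(real_queries, decoys_per_real=3, seed=None):
    """Return a shuffled log in which real queries are mixed with decoys.

    Each entry is a (query_text, is_real) pair. The is_real flag is known
    only to the user; an outside observer would not see it.
    """
    rng = random.Random(seed)
    log = [(query, True) for query in real_queries]
    for _ in range(len(real_queries) * decoys_per_real):
        log.append((rng.choice(DECOY_TERMS), False))
    rng.shuffle(log)
    return log


def observer_view(log):
    """What someone reading the search history sees: query text only."""
    return [query for query, _ in log]


real = ["knee surgery recovery", "divorce lawyer near me"]
log = obfuscate(real, decoys_per_real=3, seed=42)
visible = observer_view(log)

# Share of the visible history that reflects genuine user interest.
real_fraction = sum(is_real for _, is_real in log) / len(log)

print(visible)
print(f"fraction of log that is real: {real_fraction:.2f}")
```

With two real queries and three decoys for each, only a quarter of the visible history reflects what the user actually searched for. Whether that is enough to defeat an inferential system is exactly the kind of question about mental models that Baumer raises.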

This all led Baumer to ponder why people think such a tool does anything for them.

“What are the mental models for how these algorithmic inferential systems work, and how do those play into the strategies that people are using?” Baumer asks. “That’s a large part of what we’re trying to look at there.”

Like Baumer’s participatory design process, the initial plan for his research with Forte laid out three phases. The team would start the project by conducting interviews to try to understand people’s strategies and mental models, including any demographic differences between those who attempt to avoid algorithms and other internet users.

Next, they would try to develop some type of survey instrument to study the different strategies people use to avoid tracking technologies and determine what algorithm avoidance actually looks like. Users, based on their habits, would be put into a typology. Finally, the team would create a collection of experimental prototypes to explore the design space around algorithmic privacy and find out how designers can create systems that are useful based on how users approach data privacy.

Baumer says as they proceed into the study, they’re finding there is so much to unpack in just studying the empirical phenomenon. Now he believes that’s where they will focus the majority of their work.

Much as in his work with the journalism and legal nonprofits, Baumer is thinking about how people who don’t have expertise in computing believe these systems work.

“If a system is going to guess your age and gender, and target products or political advertisements or whatever based on those things, how do people think that those systems work and how are those folk theories [which use common instances to explain concepts] and mental models evidenced by the particular privacy-preserving strategies that people take?” Baumer asks. “On the one hand there’s an empirical question: What are the strategies that people are doing in response to this threat? And then there’s the more conceptual or theoretical question: What are the mental models and folk theories that give rise to those strategies?”

In terms of broader impact, Baumer believes there’s a real challenge in algorithmic privacy that goes beyond digital or privacy literacy—he says there is a phase shift in the way people need to think about privacy. Baumer says it’s not just how privacy is regulated and systems are designed, but also how people interact with systems. He also suggests privacy policies should disclose what is being inferred from people’s provided data rather than just what information is being collected.

Collective Privacy Concerns

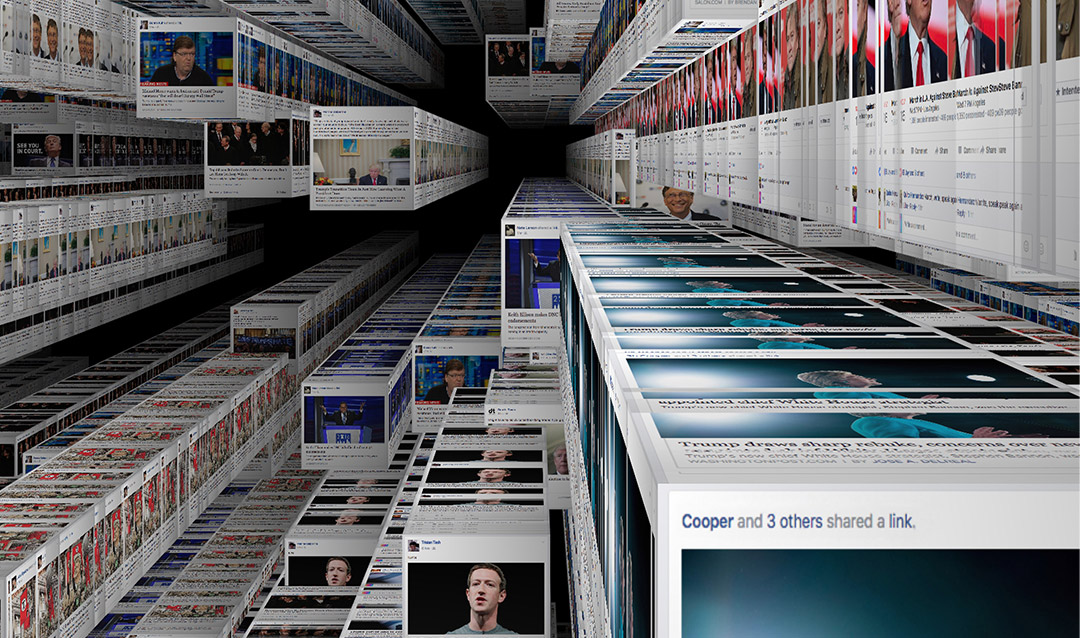

In a world in which technology gets more intertwined in everything we do, it’s becoming increasingly difficult for individuals to manage their privacy.

Privacy on an individual level is typically what comes to mind first when people think about protecting data in the digital age, says Haiyan Jia. But there are also social and collective aspects of privacy to consider.

Jia, an assistant professor of data journalism, illustrates the point with the scenario of posting a group photo online. The situation raises privacy concerns similar to those an individual faces: a photo, even if deleted, could be saved and duplicated by others once it’s shared online. But it becomes more complex because not everybody in the photo has a say in whether it’s shared.

“You might look great, but other people might be yawning in that picture, so they might hate for it to be published online,” Jia says. “The idea of privacy being individualistic starts to be limiting because sometimes it’s actually that the group’s privacy is a collective privacy.”

In order to study the collective privacy of online social activist groups, Jia and Eric Baumer teamed up with students through Lehigh’s Mountaintop Summer Experience, which invites faculty, students and external partners to come together and take new intellectual, creative and/or artistic pathways that lead to transformative new innovations. The project targets exploration of new possibilities for collaborative privacy management, developing new understanding, making new discoveries and creating new tools.

Another example Jia provides is a sports team in a huddle. In the huddle, players exchange information that pertains to each of them but also to the team as a whole. At the same time, they’re trying to keep the other team from learning that information.

“There’s this information that they want to retain,” Baumer says of Jia’s analogy. “What we’re trying to do is understand—[by] looking at a bunch of different examples—the strategies that people use to manage privacy collectively, or information about a group. How does the group regulate the flow of that information?”

In the Mountaintop project, students reached out to and interviewed a number of local activist organizations, including feminist, political, charitable and student groups that vary in age, gender and goals. They documented the types of information the groups share and how the groups decide what information is shared only within the group versus beyond it. They also surveyed the groups to find out what strategies they used to make sure information they wanted to keep private was not revealed.

So far, it’s been interesting to see how differently groups view their privacy, Jia says. Some are more open with their information in order to attract more members and make a larger impact; groups that want to be more influential have to sacrifice some of their privacy. Other groups, Jia says, aim to find people who share their social identity or to provide a safe space to exchange ideas. Those groups tend to adopt stricter rules to protect privacy.

“We find that to be really interesting—how groups function as the agent, to some extent, to allow individual preferences in terms of expressing their ideas and their needs,” Jia says. “But at the same time, individuals would collaborate together to maintain the group boundary by regulating how they would communicate about the group, within a group and beyond the group.”